Know Your Agent Starts With Knowing Your Human

Why AI governance will rise or fall on the strength of human identity

Gabriel Steele

For years, digital identity has been framed as a question of authentication: can we confirm that the person logging in is who they claim to be?

That question made perfect sense in a world where humans initiated most digital interactions. Identity was a gateway. Once someone passed through it, backend systems assumed their behaviour, decisions, and authority were legitimate.

That assumption is about to break.

“Morgan Stanley forecast that almost 50% of US online shoppers will use AI agents by 2030. This implies millions of purchase decisions per day will be delegated to agents.”[i]

As AI systems begin to transact, authorise, and act on behalf of individuals and organisations, identity’s centre of gravity is shifting. The critical question is no longer “Who is this person?” Now we must ask: “Who authorised this action, under what authority, and is that authority still valid?”

The emerging challenge is centred on the governance of agency.

The hidden risk in the agent era

Most of the discussion about AI identity focuses on non-human actors. Organisations are debating how to authenticate bots, manage machine credentials, and track automated decision-making.

Those conversations are important, but they are downstream of the real risk. Every AI agent derives its authority from a human identity.

For example: a customer who authorises a financial assistant to move money; an employee who authorises software to access internal systems; a contractor who authorises automation to act on behalf of a business unit; or a citizen who authorises an application to retrieve personal data from a government service. An agent could also create another agent.

In each case, the agent is not the source of authority. It is merely an extension of it. Even when agents create other agents, responsibility never becomes machine-native. Authority always traces back to a human or legal principal.

“This creates a structural reality that many organisations have not yet confronted – if the human identity behind an agent is weak, the agent’s authority is weak.”

For decades, fraud was built around impersonation. If an attacker stole your card, they could make a payment. If they stole your password, they could log in as you. But those attacks were bounded. They gave criminals access to a moment, a session, or a transaction. The agent era changes that equation.

If someone gains control of the software trusted to act on your behalf, they do not just impersonate you once. They inherit the authority you have already delegated. They may be able to initiate actions continuously, quietly, and at scale.

The risk shifts from a fraudster impersonating you at login to a fraudster hijacking the digital authority already acting in your name.

And in many organisations today, the binding of identity is far shakier than leaders assume.

We are delegating power from identities we barely verified

For two decades, identity programmes have optimised for onboarding and login convenience. Password resets, SMS recovery, lightweight identity proofing, and fragmented lifecycle controls were tolerated because the risk model assumed that a human would remain in the loop.

But AI changes the speed, scale, and persistence of action.

When software can execute thousands of transactions, initiate decisions continuously, or operate across systems without friction, weak identity assurance becomes a systemic control failure.

We are on the verge of allowing autonomous software to act with the authority of identities that were verified once, often imperfectly, and rarely reassessed.

That represents a major authorisation issue.

The chain of authority organisations must now manage

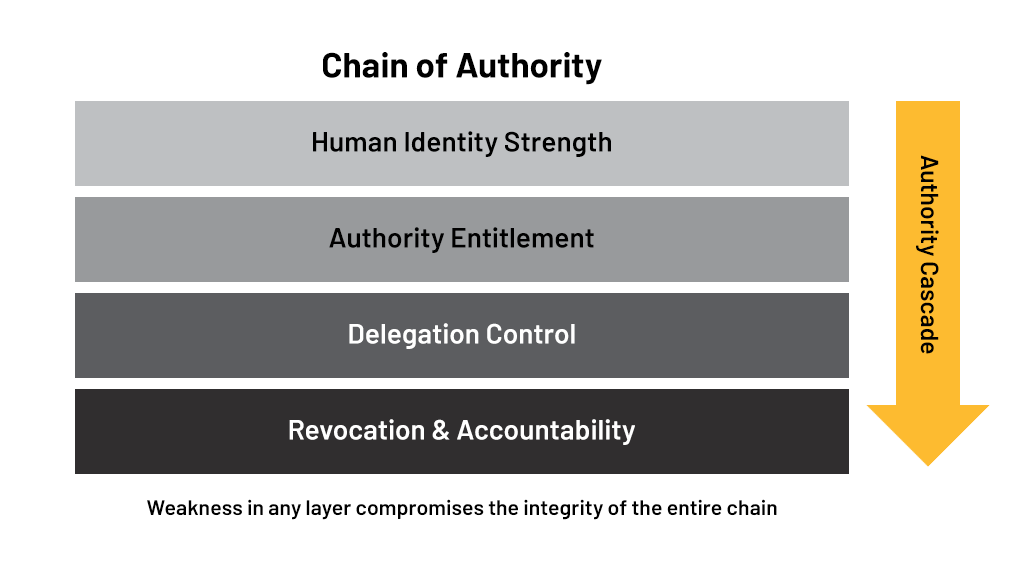

In an agent-driven world, identity is no longer a single checkpoint. It becomes a chain of authority that must hold together from origin to action.

That chain has four critical links.

- The first is human identity strength. Organisations must be confident that the individual granting authority is real, legitimate, and appropriately bound to their digital presence. If this link fails, every downstream control is compromised.

- The second is authority entitlement. Even if the identity is real, the organisation must know whether that person had the right to delegate the action in question. Being authenticated does not automatically imply permission.

- The third is delegation control. Authority must be scoped. What exactly was the agent allowed to do? For how long? Under what conditions? Without clear boundaries, delegation turns into uncontrolled access.

- The fourth is revocation and accountability. Organisations must be able to trace actions back to their source, withdraw authority when circumstances change, and demonstrate oversight. Without this, delegation becomes irreversible and opaque.
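To make the four links concrete, here is a minimal sketch of how a single authorisation check might walk the chain from an agent's action back to its human or legal principal. It is illustrative only: the names `Principal`, `Grant`, `root_principal`, and `check`, the 1-to-3 assurance scale, and the default threshold are all assumptions made for this example, not a reference to any particular product or standard.

```python
from __future__ import annotations

from dataclasses import dataclass
from datetime import datetime


@dataclass(frozen=True)
class Principal:
    """A human or legal principal (hypothetical model)."""
    principal_id: str
    assurance_level: int          # assumed scale: 1 = lightly proofed, 3 = strongly proofed
    entitlements: frozenset[str]  # actions this principal is entitled to delegate


@dataclass
class Grant:
    """A scoped, time-limited delegation of authority to an agent."""
    agent_id: str
    issuer: Principal | Grant     # a principal, or a parent grant when an agent creates an agent
    scope: frozenset[str]
    expires_at: datetime
    revoked: bool = False


def root_principal(grant: Grant) -> Principal:
    """Follow the delegation chain back to the principal at its origin."""
    issuer = grant.issuer
    while isinstance(issuer, Grant):
        issuer = issuer.issuer
    return issuer


def check(grant: Grant, action: str, now: datetime,
          min_assurance: int = 2) -> tuple[bool, str]:
    """Return (allowed, reason) for an agent attempting `action` under `grant`."""
    node: Principal | Grant = grant
    while isinstance(node, Grant):
        # Link 4: a revocation anywhere in the chain withdraws authority downstream.
        if node.revoked:
            return False, f"grant to {node.agent_id} was revoked"
        # Link 3: every hop must still be in date and must cover the action.
        if now >= node.expires_at:
            return False, f"grant to {node.agent_id} has expired"
        if action not in node.scope:
            return False, f"grant to {node.agent_id} does not cover {action}"
        node = node.issuer

    principal = node
    # Link 1: the identity at the origin must be strong enough to justify delegation.
    if principal.assurance_level < min_assurance:
        return False, f"identity assurance for {principal.principal_id} is too weak"
    # Link 2: the principal must have been entitled to delegate this action.
    if action not in principal.entitlements:
        return False, f"{principal.principal_id} may not delegate {action}"
    return True, f"authorised by {principal.principal_id}"
```

In this sketch, calling `check(grant, "pay", now)` on a grant held by a sub-agent succeeds only if every grant above it is unrevoked, unexpired, and in scope, and if the principal at the origin is both strongly identified and entitled to delegate payments. Every result also names a responsible party or a failing link, which is the traceability the fourth link demands.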

Most organisations today are investing heavily in the third link – defining permissions and managing access policies. But the first and fourth links, identity strength and revocation governance, remain dangerously underdeveloped.

Regulators will focus on authority, not algorithms

As AI oversight frameworks emerge globally, many organisations assume scrutiny will centre on model transparency, bias, or explainability. Those issues matter, but they are not where enforcement will begin.

Regulators historically intervene where accountability is unclear and risk becomes systemic. In the agent era, that accountability will centre on authority.

They will likely ask: “Who authorised this action?”, “Was that authority valid at the time?”, “Could it have been withdrawn?”, “Was the identity strong enough to justify delegation?”, and “Can responsibility be traced?”

These are identity questions, not AI questions.

Organisations that treat identity as a login function will struggle to answer them. Those that treat identity as a lifecycle control system will be far better prepared.

Identity is expanding from verification to control of agency

This shift changes the role identity plays inside organisations. Identity is no longer just about enabling access. It becomes the mechanism that governs who – or what – is allowed to act.

“That means identity must extend beyond authentication events and into continuous assurance. It must bind people to their actions over time, not just at the moment of login. It must support delegation without losing accountability. And it must allow authority to be revoked as easily as it is granted.”

In other words, identity becomes infrastructure for managing agency itself.

Why this matters now

The agent future is arriving unevenly, but it is arriving quickly. Organisations are already deploying automation that can initiate payments, access sensitive data, or make operational decisions. The convenience is immediate. The governance implications are only beginning to surface.

The risk is not that AI will act independently of humans. The risk is that it will act very effectively on behalf of identities we never properly secured.

If that happens, the industry will discover that the true foundation of AI governance was never machine identity. It was always human identity strength.

The path forward

The organisations that navigate this shift successfully will not be the ones that build the most sophisticated AI controls. They will be the ones that ensure every delegated action rests on a strong, traceable, and revocable identity foundation.

Authority begins with knowing your human.

[i] https://www.businessinsider.com/morgan-stanley-expects-ai-agents-to-fuel-e-commerce-boom-2025-11